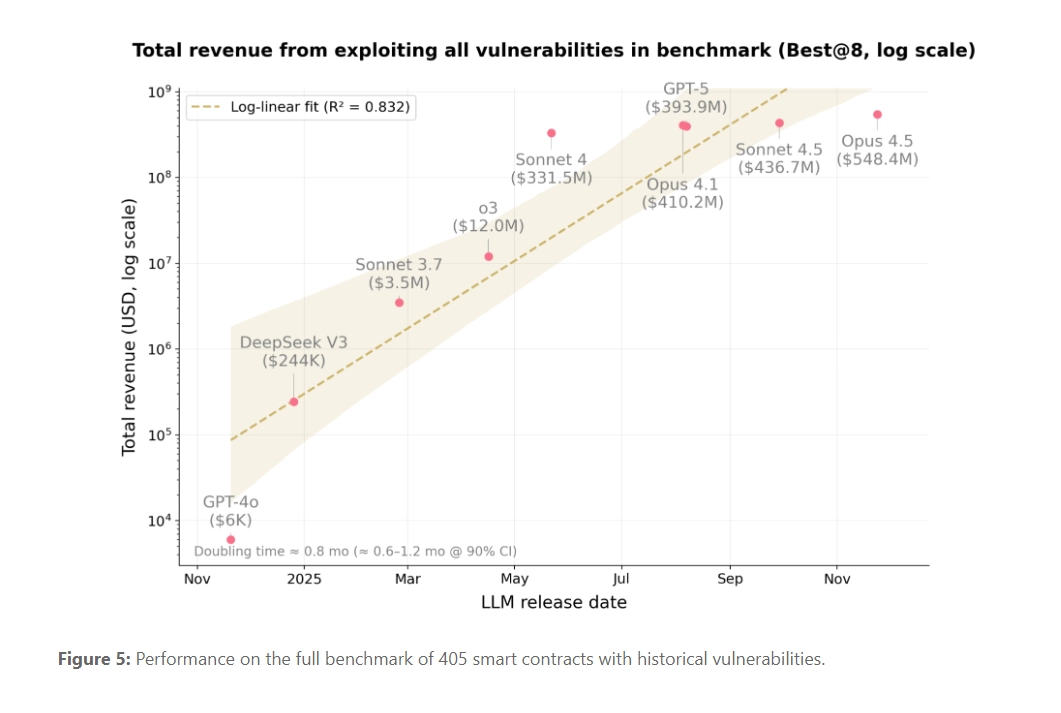

The offensive-security capabilities of large language models (LLMs) are advancing quickly, and the risk is no longer theoretical. New research from Anthropic and MATS scholars shows that frontier AI agents can do more than replicate historical blockchain hacks. They can now autonomously discover and exploit novel, profitable zero-day vulnerabilities in smart contracts.

The study introduced SCONE-bench, a new benchmark built from 405 real-world smart contracts that were exploited between 2020 and 2025. On the contracts exploited after the models' March 2025 knowledge cutoff, models such as Claude Opus 4.5 and GPT-5 collectively produced exploits worth $4.6 million in simulated losses. This figure sets a concrete, quantifiable lower bound on the economic risk that advanced AI agents pose to the financial technology sector.