AI2 has introduced Bolmo, a new family of byte-level language models that challenges the long-standing dominance of subword tokenization by offering a practical, high-performing alternative. Bolmo is a meaningful step toward making byte-level language models a viable choice for developers and researchers rather than a research curiosity.

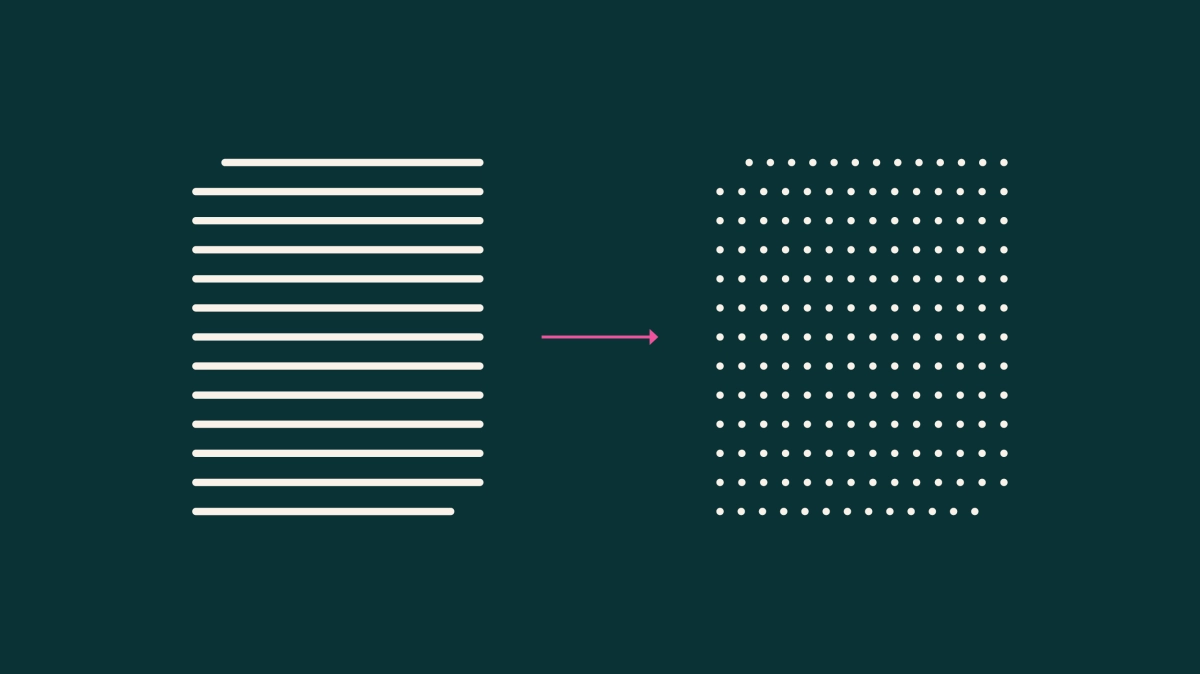

For years, nearly all advanced language models have relied on subword tokenization, which breaks text into opaque chunks such as "▁inter" or "national". While successful, this approach has notable drawbacks: weak character-level understanding, awkward handling of rare words and whitespace, and a fixed vocabulary that generalizes poorly across diverse languages. Byte-level models, which operate directly on raw UTF-8 bytes, promise to resolve these issues by working with text at its most granular level, but their high training costs have kept them from widespread adoption. According to the announcement, building a competitive byte-level model traditionally meant training from scratch, a costly and time-consuming process that left byte-level models trailing the rapid advances in subword models.
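To make the contrast concrete, here is a minimal Python sketch of two views of the same text. The `greedy_subword_split` function and its two-entry vocabulary are hypothetical and only loosely mimic a real subword tokenizer; neither function reflects how Bolmo actually ingests bytes. The sketch shows that a byte-level vocabulary is simply the 256 possible byte values, that a non-ASCII character such as "é" spans several bytes, and that byte sequences are much longer than subword sequences, which is one commonly cited reason byte-level models cost more to train.

```python
# Illustrative sketch only: a toy comparison of byte-level and subword views.
# The vocabulary and splitting rule are hypothetical, not Bolmo's or any
# production tokenizer's.

def to_byte_ids(text: str) -> list[int]:
    """Map text to its UTF-8 byte values; every id lies in range(256)."""
    return list(text.encode("utf-8"))


def from_byte_ids(ids: list[int]) -> str:
    """Invert to_byte_ids: reassemble bytes and decode them as UTF-8."""
    return bytes(ids).decode("utf-8")


def greedy_subword_split(word: str, vocab: set[str]) -> list[str]:
    """Hypothetical greedy longest-match split against a fixed vocabulary.

    When no vocabulary entry matches at a position, it falls back to a
    single character, loosely mimicking how subword tokenizers fragment
    unseen strings.
    """
    pieces = []
    i = 0
    while i < len(word):
        # Try the longest candidate first; a single character always matches.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab or j == i + 1:
                pieces.append(word[i:j])
                i = j
                break
    return pieces


if __name__ == "__main__":
    toy_vocab = {"inter", "national"}  # hypothetical two-entry vocabulary

    print(greedy_subword_split("international", toy_vocab))  # ['inter', 'national']
    print(greedy_subword_split("interbyte", toy_vocab))  # ['inter', 'b', 'y', 't', 'e']

    print(to_byte_ids("é"))  # [195, 169]: one character, two bytes
    print(len(to_byte_ids("international")), "bytes vs",
          len(greedy_subword_split("international", toy_vocab)), "subword pieces")
```

The character-by-character fallback for "interbyte" illustrates the rare-word problem in miniature, while the byte view never needs a fallback: every string already has a valid UTF-8 encoding, at the price of a longer sequence.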