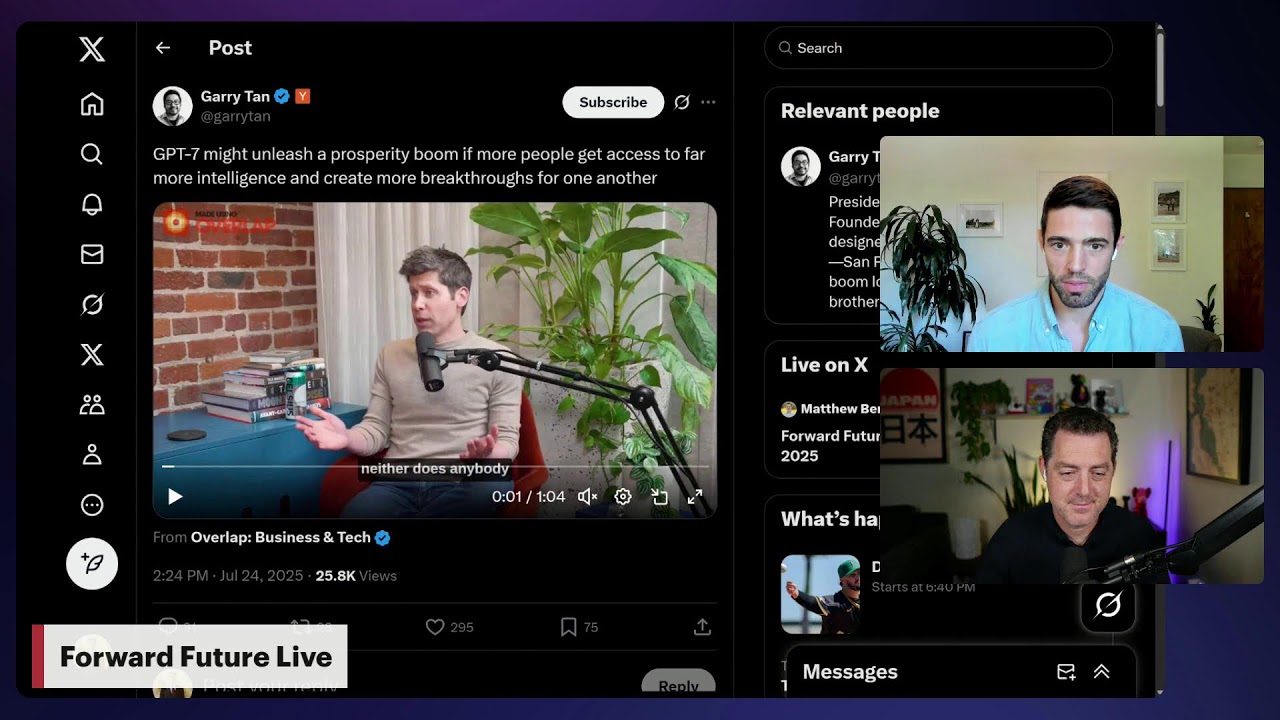

The relentless pursuit of scale in artificial intelligence, once the primary driver of breakthroughs, is giving way to a more nuanced approach. This was a central theme when Matthew Berman hosted Nathan Benaich, General Partner at Air Street Capital, for a "Forward Future Live" discussion of the evolving landscape of AI development and investment. Their conversation pivoted sharply from the conventional wisdom of "scaling laws" to the critical importance of novel architectures and meticulously curated data.

Benaich articulated a sentiment gaining traction across the AI research community and the venture capital ecosystem: "The era of just purely scaling up models and getting performance for free is largely behind us." This assertion points to a significant inflection point. For years, simply increasing parameter counts and training dataset sizes yielded predictable performance gains, fueling the rise of massive foundation models. The discussion made clear, however, that this low-hanging fruit has largely been picked, and that further progress calls for a strategic shift in how it is conceived and pursued.