The Allen Institute for AI (AI2) has released Molmo2 VLM, a powerful new family of open-weight vision-language models that directly challenge proprietary systems like Gemini 3 Pro by mastering precise video grounding and tracking.

The strongest vision-language models (VLMs), particularly for video, have long been locked behind corporate walls. While Google, OpenAI, and Anthropic compete with closed weights and proprietary data, the open-source community has struggled to build competitive foundations, often relying on synthetic data distilled from those very closed systems.

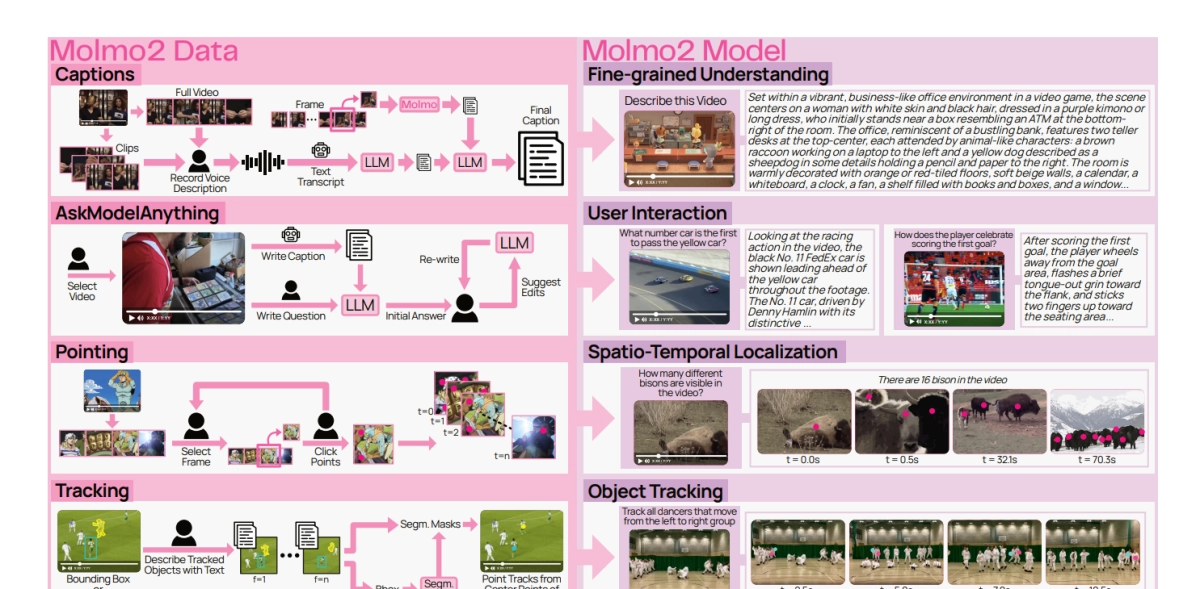

That dynamic has now changed. The Allen Institute for AI (AI2) has released Molmo2 VLM, a new family of fully open-weight models that not only match the state of the art among open VLMs but also demonstrate exceptional video-grounding capabilities that, on specific benchmarks, surpass even proprietary giants.

Molmo2 VLM is a direct shot across the bow of models like Gemini, focusing on a critical missing piece of multimodal AI: precise spatio-temporal grounding.

Grounding is a model's ability not just to understand a video conceptually but to pinpoint exactly *where* and *when* an object or event occurs, in pixels and in time. For applications ranging from robotics and industrial automation to advanced video search and accessibility, this capability is non-negotiable.
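To make the *where* and *when* concrete, here is a minimal sketch of how a single grounded answer could be represented and mapped back onto a video's frames and pixels. This is not Molmo2's actual output format or API. The `GroundedPoint` class, the `to_pixels_and_frame` helper, and the assumption that coordinates are normalized to a 0 to 100 range are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroundedPoint:
    """Hypothetical grounding result: *when* (seconds) and *where* (normalized x, y)."""
    t_sec: float  # timestamp in the video, in seconds
    x_pct: float  # horizontal position, 0-100 (assumed normalization, not Molmo2's documented format)
    y_pct: float  # vertical position, 0-100 (assumed normalization)
    label: str    # object or event the point refers to


def to_pixels_and_frame(p: GroundedPoint, width: int, height: int, fps: float) -> tuple[int, int, int]:
    """Map a normalized point to (frame_index, x_px, y_px) for a video of the given size and frame rate."""
    if not (0.0 <= p.x_pct <= 100.0 and 0.0 <= p.y_pct <= 100.0):
        raise ValueError("coordinates must be in the 0-100 range")
    if p.t_sec < 0 or fps <= 0:
        raise ValueError("timestamp must be non-negative and fps positive")
    # Temporal grounding: the frame on screen at time t (floored).
    frame = int(p.t_sec * fps)
    # Spatial grounding: scale to pixels, clamping so 100% maps to the last valid pixel.
    x_px = min(int(p.x_pct / 100.0 * width), width - 1)
    y_px = min(int(p.y_pct / 100.0 * height), height - 1)
    return frame, x_px, y_px


if __name__ == "__main__":
    point = GroundedPoint(t_sec=2.5, x_pct=50.0, y_pct=25.0, label="red car")
    print(to_pixels_and_frame(point, width=1920, height=1080, fps=30.0))
```

For example, a point at 2.5 s with normalized coordinates (50, 25) in a 1920×1080 video at 30 fps lands on frame 75 at pixel (960, 270). Normalizing coordinates keeps a grounded answer independent of the resolution the model saw, which is one common design choice; a real integration would follow whatever convention the model itself documents.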