For the past year, the artificial intelligence narrative has been dominated by the cinematic spectacle of Sora—the ability to generate hyper-realistic video clips from a single text prompt. But for game developers, that technology has remained a beautiful, frustrating window: you can look, but you can’t touch. The core issue is state. Games demand interaction, and passive video models simply cannot handle the fundamental requirement of a live, stateful simulation.

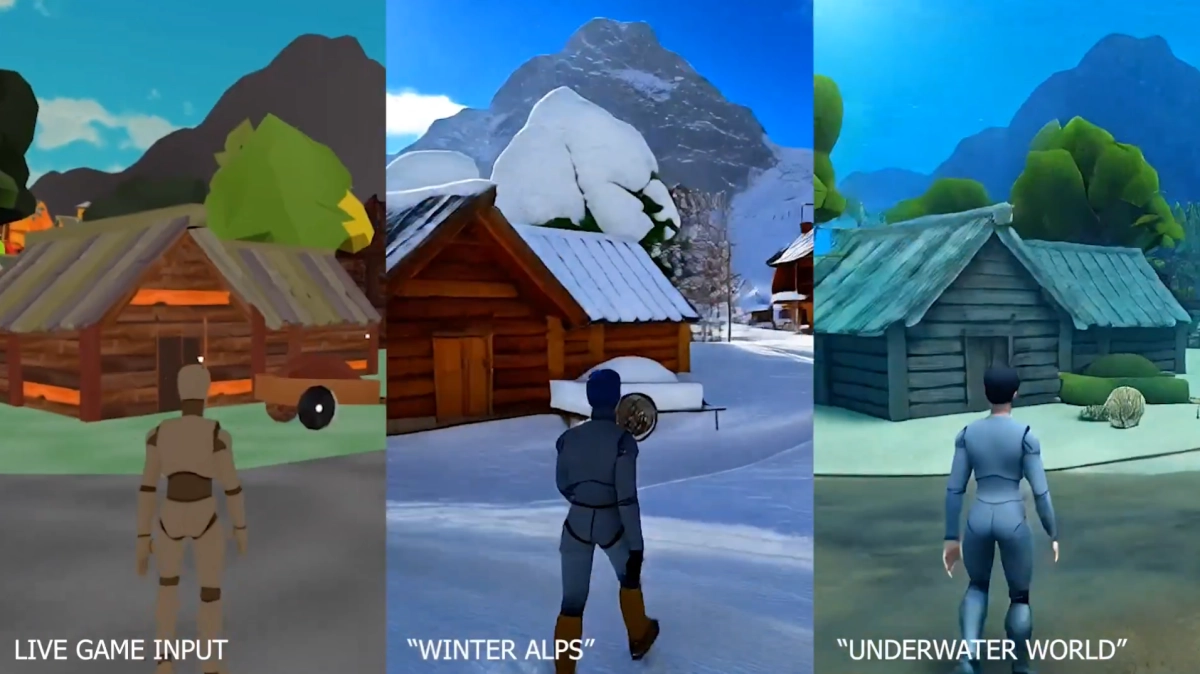

Enter Moonlake. The frontier research lab has officially unveiled Reverie, a game-native diffusion model that aims to move generative AI from producing passive sequences to driving live, playable worlds. If Moonlake succeeds, we aren’t just looking at better graphics; we’re looking at the birth of "vibe coding": a world where interactive environments are dreamed into existence in real time.

Traditional generative AI has struggled with gaming because of the latency wall. Games are simulations that must deliver every new frame within a fixed time budget, the "frame time." In a fast-paced action game, a delay of even 100 milliseconds between a button press and a visual reaction is enough to break immersion and make the mechanics feel "mushy." Most generative models are computationally heavy, taking seconds or even minutes to render a single sequence.
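To put rough numbers on that latency wall, here is a minimal back-of-the-envelope sketch. The frame rates and the helper names `frame_budget_ms` and `frames_spanned` are illustrative assumptions, not figures or code from Moonlake; the only number taken from the text is the 100 millisecond delay.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce a single frame, in milliseconds."""
    return 1000.0 / fps


def frames_spanned(latency_ms: float, fps: float) -> float:
    """How many frame intervals elapse during a given input-to-display delay."""
    return latency_ms / frame_budget_ms(fps)


if __name__ == "__main__":
    # Illustrative target frame rates (assumed, not from the article).
    for fps in (30, 60, 120):
        budget = frame_budget_ms(fps)
        late = frames_spanned(100.0, fps)
        print(f"{fps} fps: {budget:.2f} ms per frame; "
              f"a 100 ms delay spans about {late:.1f} frames")
```

At an assumed 60 frames per second, each frame has a budget of roughly 16.7 milliseconds, so a 100 millisecond delay covers about six frames. A model that needs whole seconds per sequence misses that budget by orders of magnitude.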