Clarifai, an AI platform company with roots stretching back to 2013, is making a bold new claim: its software can make standard GPUs run large language models faster than specialized, non-GPU hardware. The company today launched the Clarifai Reasoning Engine, an inference optimization layer designed specifically for the demanding, token-hungry workloads of AI agents.

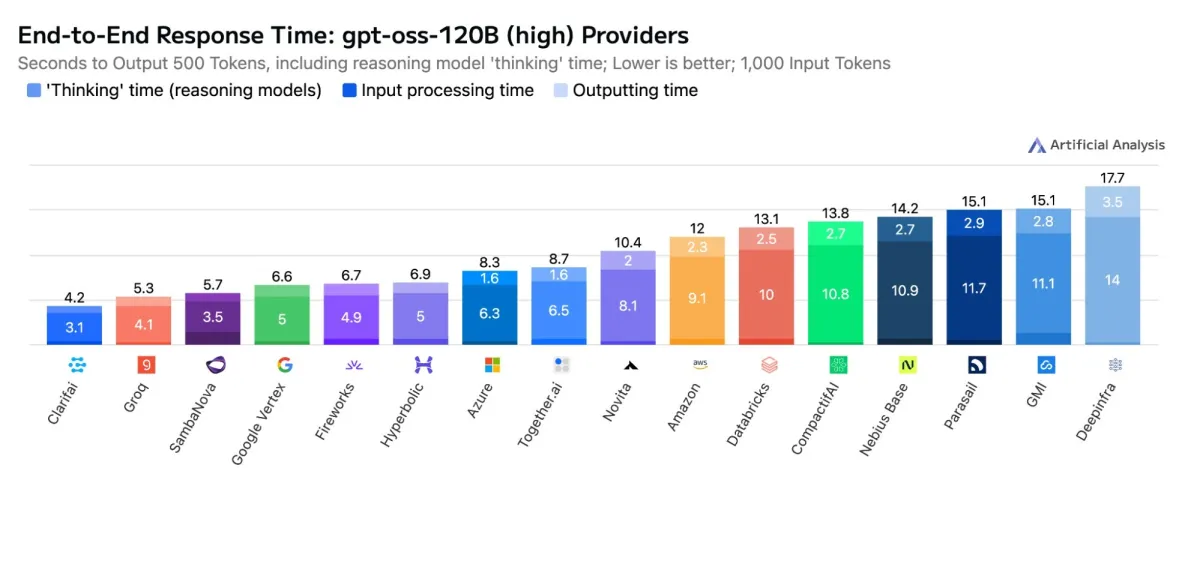

The announcement comes on the heels of new benchmarks from the independent firm Artificial Analysis. According to Clarifai, when running OpenAI's 120-billion-parameter open-weight model, `gpt-oss-120b`, its new engine hit speeds of over 500 tokens per second with a time-to-first-token of just 0.3 seconds. These figures, Clarifai states, not only set new records for GPU-based inference but also surpassed the performance of some dedicated ASIC accelerators from other providers.
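To see how the two reported numbers combine in practice, consider a simplified model: a request's wall-clock time is roughly the time-to-first-token plus the number of output tokens divided by the output speed. The sketch below applies that model to the figures above. It is only an illustration under stated assumptions (constant decode speed, no network or queuing overhead) and is not Clarifai's or Artificial Analysis's methodology. The function names and the 1,000-token and ten-step agent workloads are hypothetical.

```python
def estimate_response_time(ttft_s: float, tokens_per_s: float, output_tokens: int) -> float:
    """Rough end-to-end time for one request: wait for the first token,
    then stream the output at a constant decode rate.

    Simplifying assumption: decode speed is constant and network
    or queuing overhead is ignored.
    """
    if tokens_per_s <= 0:
        raise ValueError("tokens_per_s must be positive")
    return ttft_s + output_tokens / tokens_per_s


def estimate_agent_time(ttft_s: float, tokens_per_s: float, tokens_per_step: list[int]) -> float:
    """Total time for a hypothetical agent that makes one sequential model call per step.

    Every call pays the time-to-first-token again, so TTFT adds up across steps.
    """
    return sum(estimate_response_time(ttft_s, tokens_per_s, n) for n in tokens_per_step)


if __name__ == "__main__":
    # Figures Clarifai reports for the 120B gpt-oss model
    ttft, speed = 0.3, 500.0
    # Hypothetical single response of 1,000 output tokens
    print(f"{estimate_response_time(ttft, speed, 1000):.2f} s")  # 2.30 s
    # Hypothetical agent: 10 sequential calls of 200 output tokens each
    print(f"{estimate_agent_time(ttft, speed, [200] * 10):.2f} s")  # 7.00 s
```

In the agent case, TTFT accounts for 3 of the 7 seconds, which hints at why both latency and throughput matter for multi-step agent workloads.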