The quest for Artificial General Intelligence (AGI) has in recent years been dominated by advances in large language models, yet a compelling new frontier is emerging: world models. Matthew Berman recently showcased the capabilities of Marble from World Labs, a product developed under the leadership of renowned AI researcher Dr. Fei-Fei Li. Their view is that general intelligence hinges not on predicting the next word, but on comprehending and simulating the physical world.

Berman’s deep dive into Marble highlights a significant shift in AI development. While most frontier labs currently concentrate on large language models (LLMs), Dr. Fei-Fei Li and her team at World Labs are championing a different path. As Berman puts it, "Fei-Fei Li and team think that world models are the way to artificial general intelligence, not large language models." The distinction is fundamental: LLMs are trained to predict the next token in a sequence, which lets them generate coherent text. World models, by contrast, aim to build an internal model of the physical world and to predict how it behaves, how its physics plays out, and how it looks.

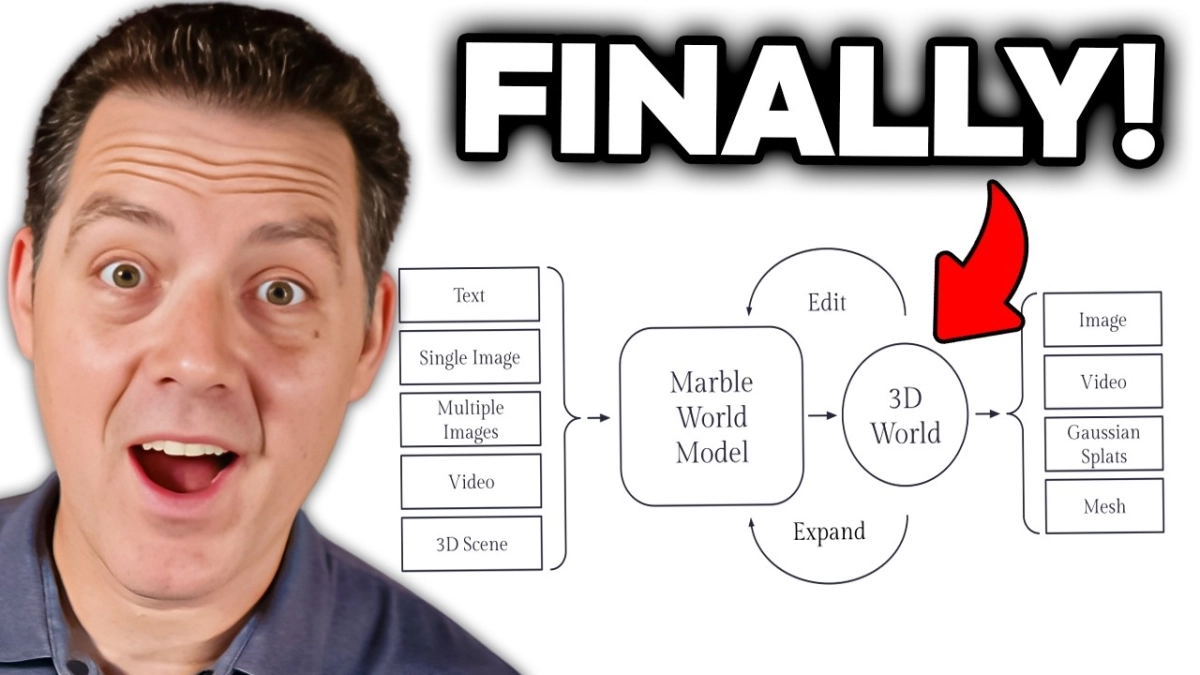

The core insight driving Marble is the inherent multimodality of human experience. We perceive and interact with the world through several channels at once: sight, sound, touch, and language. This integrated understanding allows us to reason about and act within our environment. Marble seeks to give AI a similar capacity by creating digital worlds that are not just visually rendered but also interactive and editable, paving the way for more sophisticated AI agents.