NVIDIA has once again swept the MLPerf Training v5.1 benchmarks, posting the fastest time-to-train across critical AI workloads. The company's Blackwell Ultra architecture made a formidable debut, setting new records for large language model (LLM) training. This sweep underscores NVIDIA's continued leadership in scaling intelligence through hardware and software breakthroughs.

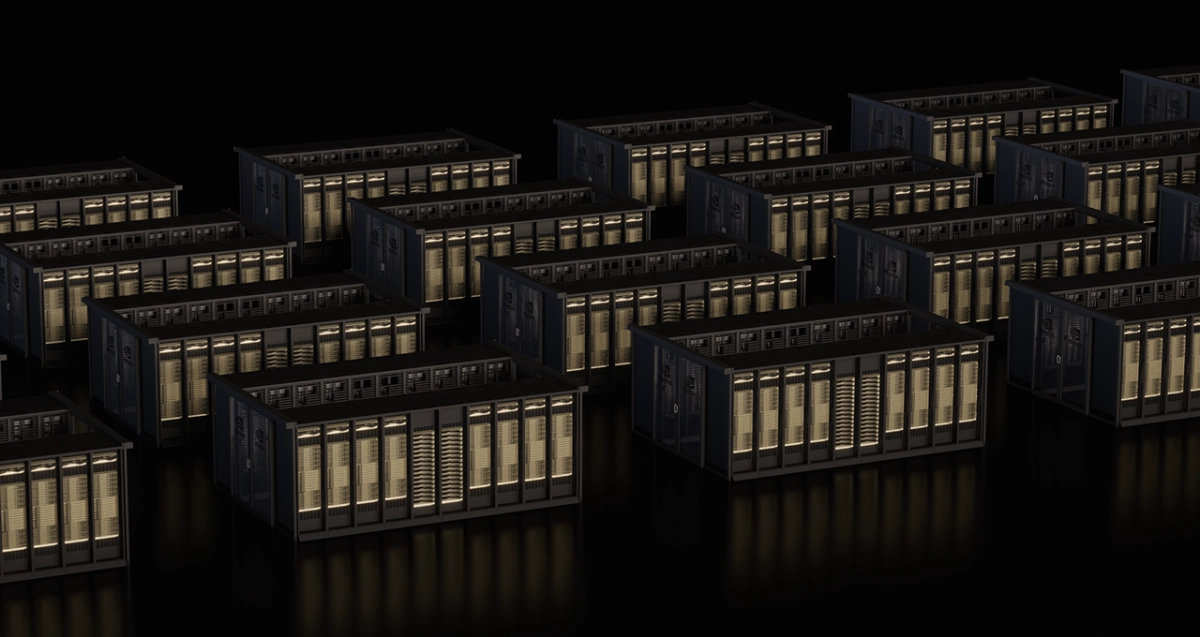

The GB300 NVL72 rack-scale system, powered by Blackwell Ultra, delivered significant generational leaps. Compared with the prior-generation Hopper architecture at the same GPU count, it completed Llama 3.1 405B pretraining more than 4x faster and Llama 2 70B LoRA fine-tuning nearly 5x faster. These gains stem from Blackwell Ultra's architectural enhancements, including new Tensor Cores that deliver 15 petaflops of NVFP4 AI compute per GPU, and 279 GB of HBM3e memory per GPU. The NVIDIA Quantum-X800 InfiniBand platform also debuted, doubling scale-out networking bandwidth over the previous generation.
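To put the per-GPU figures in rack-scale terms, here is a back-of-envelope sketch. It assumes the quoted 15 petaflops and 279 GB are per-GPU peak numbers and that a GB300 NVL72 rack holds 72 Blackwell Ultra GPUs; the function names and the 100-hour baseline are hypothetical, chosen only for illustration. Peak NVFP4 throughput is an upper bound, not what a training run sustains, and MLPerf reports time-to-train rather than FLOPS.

```python
# Back-of-envelope sketch (hypothetical helpers): assumes the per-GPU figures
# quoted in the text and 72 Blackwell Ultra GPUs per GB300 NVL72 rack.
GPUS_PER_RACK = 72
NVFP4_PFLOPS_PER_GPU = 15.0  # quoted peak NVFP4 Tensor Core compute per GPU
HBM3E_GB_PER_GPU = 279       # quoted HBM3e capacity per GPU


def rack_totals(gpus: int = GPUS_PER_RACK) -> tuple[float, int]:
    """Return (aggregate peak NVFP4 exaflops, aggregate HBM3e in GB)."""
    exaflops = gpus * NVFP4_PFLOPS_PER_GPU / 1000.0  # 1 exaflop = 1000 petaflops
    hbm_gb = gpus * HBM3E_GB_PER_GPU
    return exaflops, hbm_gb


def time_after_speedup(baseline_hours: float, speedup: float) -> float:
    """An N-times speedup at equal GPU count divides time-to-train by N."""
    return baseline_hours / speedup


if __name__ == "__main__":
    ef, gb = rack_totals()
    print(f"Peak NVFP4 per rack: {ef:.2f} exaflops, HBM3e per rack: {gb} GB")
    # Hypothetical 100-hour Hopper baseline, scaled by the quoted speedups.
    print(f"Pretraining at 4x: {time_after_speedup(100.0, 4.0):.1f} h")
    print(f"LoRA fine-tuning at 5x: {time_after_speedup(100.0, 5.0):.1f} h")
```

Run as-is, this prints roughly 1.08 exaflops and 20,088 GB per rack. Because the reported pretraining speedup is "more than 4x", the real time saved would be at least what the 4x line shows.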